Artificial intelligence is moving out of experimentation and into the core operations of organizations. Firms are deploying AI in recruiting, financial services, customer service, surveillance, and decision-making processes. As adoption accelerates, a new challenge emerges: how to govern AI responsibly without slowing innovation.

Developing a robust AI governance framework has become a key organizational competence. Regulators, industry organizations, and research centers increasingly share the concern that AI systems need organized control mechanisms to operate ethically, transparently, and safely. Under the OECD’s approach to trustworthy AI, organizations deploying AI should have accountability structures that define how their systems work, what level of risk they carry, and how decisions are recorded.

Many large organizations have responded by forming internal AI governance bodies, often called AI ethics committees or AI review boards. These committees operate much like the institutional review boards used in research settings, evaluating high-risk AI deployments before they reach production. Research by the World Economic Forum indicates that structured AI governance frameworks enhance transparency and reduce the operational risks of AI adoption.

An AI governance framework is no longer optional for organizations using generative AI, machine learning, or autonomous decision systems. It is an essential part of responsible AI implementation.

This article discusses how companies can create viable AI governance frameworks, establish internal review boards, design approval workflows, and sustain compliance-ready documentation.

Why an AI Governance Framework Must Originate Inside the Organization

For years, AI ethics discussions centered primarily on government regulation. However, AI is developing so quickly that rules and regulations often lag behind how the technology is actually deployed. Consequently, companies using AI must take on governance responsibility internally by establishing a formal AI governance framework.

AI systems carry risks that are fundamentally different from those of traditional software. Machine learning models may embed bias, make probabilistic decisions, or change behavior through retraining. These attributes demand oversight mechanisms capable of assessing both technical performance and ethical consequences.

The European Union’s AI Act reflects this movement toward structured oversight. The legislation categorizes AI systems by risk level and requires organizations deploying high-risk AI to maintain detailed documentation, human oversight processes, and transparency. Similar governance expectations are emerging worldwide.
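To make the idea of risk-based obligations concrete, here is a minimal sketch of how an internal policy might map risk tiers to required controls. The tier labels and control names are illustrative assumptions for an internal policy, not the Act's legal definitions. Only the high-risk entries mirror the obligations mentioned above.

```python
from enum import Enum


class RiskTier(Enum):
    # Illustrative internal labels; not legal definitions from any regulation.
    MINIMAL = "minimal"
    LIMITED = "limited"
    HIGH = "high"
    UNACCEPTABLE = "unacceptable"


# Hypothetical mapping from tier to internal controls. The HIGH entries follow
# the obligations named in the text; the others are assumptions.
REQUIRED_CONTROLS = {
    RiskTier.MINIMAL: [],
    RiskTier.LIMITED: ["transparency_notice"],
    RiskTier.HIGH: [
        "detailed_documentation",
        "human_oversight",
        "transparency_notice",
    ],
}


def required_controls(tier: RiskTier) -> list[str]:
    """Return the controls a system in this tier needs before deployment."""
    if tier is RiskTier.UNACCEPTABLE:
        raise ValueError("Systems in this tier are not eligible for deployment.")
    return list(REQUIRED_CONTROLS[tier])


def missing_controls(tier: RiskTier, implemented: set[str]) -> list[str]:
    """List required controls that the team has not yet implemented."""
    return [c for c in required_controls(tier) if c not in implemented]
```

A governance committee could call `missing_controls(RiskTier.HIGH, {"human_oversight"})` to see which controls still need work before a high-risk system moves forward.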

Internal governance systems enable organizations to be proactive rather than reactive. Instead of responding to regulatory questions after the fact, firms can implement an AI governance framework that evaluates AI systems before deployment. Governance committees assess potential harms, validate training data integrity, and ensure that systems align with organizational policies.

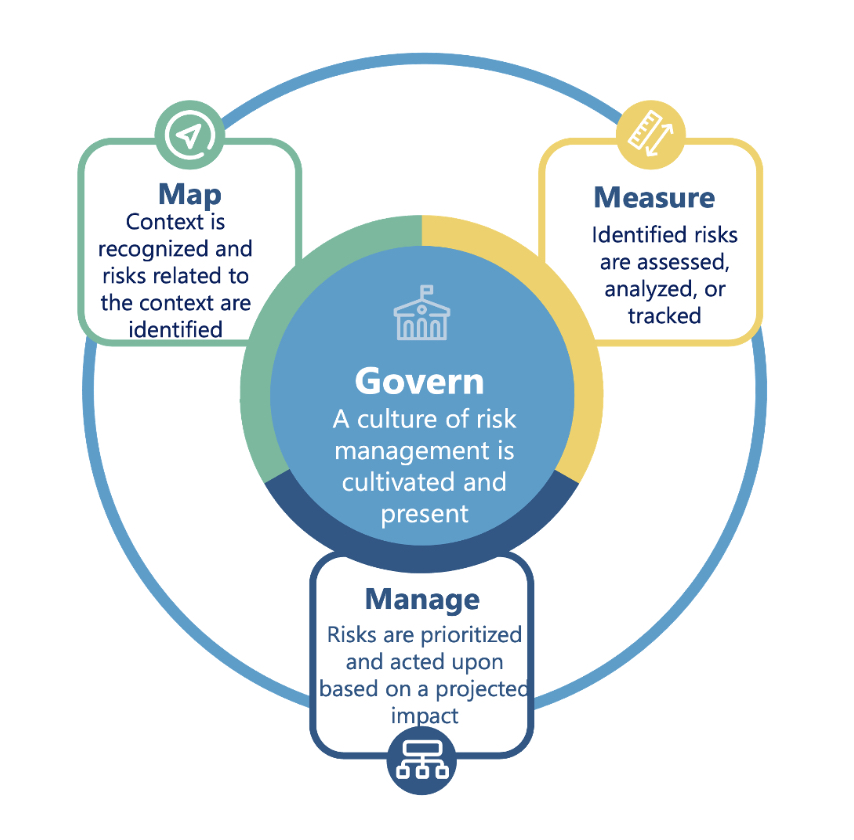

Findings from the National Institute of Standards and Technology emphasize that effective AI governance requires well-organized risk management procedures that are integrated into the organization’s everyday work. By incorporating governance into the development process, organizations can manage risks while continuing to innovate.

Seen this way, an AI governance framework is not merely a compliance practice but a strategic capability that facilitates sustainable AI adoption.

Designing Internal AI Review Boards

Establishing internal AI review boards is one of the most effective governance tools available. These boards serve as evaluation groups that assess proposed AI applications before they enter the production environment.

An AI review board typically includes representatives from multiple disciplines: machine learning engineers, legal professionals, compliance officers, and business executives. This multidisciplinary composition ensures that both technical and ethical considerations are addressed during the approval process.

The Partnership on AI recommends that organizations establish cross-functional oversight bodies capable of reviewing AI development practices, assessing potential societal impacts, and ensuring that development decisions remain transparent.

An AI review board does not aim to block innovation. Instead, it provides a systematic review. Teams proposing AI deployments submit documentation covering the model’s purpose, training data sources, performance measures, and potential risks. The board then evaluates these materials to determine whether the system meets the standards defined in the organization’s AI governance framework.
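The submission described above can be sketched as a simple record with a completeness check. This is a minimal illustration; the class name, field names, and the rule that every section must be non-empty are assumptions rather than a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewSubmission:
    """Hypothetical package a team sends to the review board."""

    model_name: str
    purpose: str = ""
    training_data_sources: list[str] = field(default_factory=list)
    performance_measures: dict[str, float] = field(default_factory=dict)
    potential_risks: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty."""
        sections = {
            "purpose": self.purpose.strip(),
            "training_data_sources": self.training_data_sources,
            "performance_measures": self.performance_measures,
            "potential_risks": self.potential_risks,
        }
        return [name for name, value in sections.items() if not value]


# Example with placeholder values: the risk section has not been filled in.
submission = ReviewSubmission(
    model_name="resume-screening-model",
    purpose="Rank applications for recruiter review",
    training_data_sources=["internal hiring records"],
    performance_measures={"accuracy": 0.9},
)
incomplete = submission.missing_sections()
```

A board could return any submission with a non-empty `missing_sections()` result before spending reviewer time on it.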

In practice, review boards typically examine whether a model introduces bias, whether decisions are explainable, and whether a human-in-the-loop mechanism exists for high-stakes decisions. By establishing such committees, organizations create a standardized method of assessing AI deployments, which reduces ad hoc decision-making and helps ensure that technology development is guided by ethical principles.
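The three checks named above can be expressed as a small decision function. This is a sketch under assumed names and an assumed two-outcome policy ("approve" or "revise"); a real board would likely weigh more criteria and rely on human judgment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewFindings:
    """Hypothetical summary of what reviewers found for one model."""

    bias_assessed: bool
    decisions_explainable: bool
    high_stakes: bool
    human_in_the_loop: bool


def review_decision(findings: ReviewFindings) -> tuple[str, list[str]]:
    """Return ("approve" or "revise", list of open issues)."""
    issues = []
    if not findings.bias_assessed:
        issues.append("bias assessment missing")
    if not findings.decisions_explainable:
        issues.append("decisions are not explainable")
    # Human oversight is only required here when the use case is high-stakes.
    if findings.high_stakes and not findings.human_in_the_loop:
        issues.append("high-stakes use lacks human-in-the-loop")
    return ("approve" if not issues else "revise", issues)
```

Returning the list of issues alongside the outcome gives the proposing team a concrete record of what to address before resubmitting.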

Approval Workflows and Model Documentation

An effective AI governance framework depends heavily on structured approval workflows. These workflows define how AI projects progress toward implementation and ensure proper controls are applied at every stage.

A typical governance model includes multiple checkpoints throughout the AI lifecycle. At the design stage, teams document the intended use case and potential risks. During development, they track model performance and training data provenance. Before deployment, the system undergoes formal review by the governance board.
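The checkpoints above can be modeled as a sequence of stages, each with artifacts that must exist before a project moves on. The stage names follow the paragraph; the artifact names are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    DESIGN = 1
    DEVELOPMENT = 2
    REVIEW = 3
    DEPLOYED = 4


# Hypothetical artifacts required to leave each stage.
EXIT_REQUIREMENTS = {
    Stage.DESIGN: {"use_case", "risk_assessment"},
    Stage.DEVELOPMENT: {"performance_report", "data_provenance"},
    Stage.REVIEW: {"board_approval"},
}


def advance(stage: Stage, artifacts: set[str]) -> Stage:
    """Move to the next stage, or raise if required artifacts are missing."""
    if stage is Stage.DEPLOYED:
        raise ValueError("Project is already deployed.")
    missing = EXIT_REQUIREMENTS[stage] - artifacts
    if missing:
        raise ValueError(f"Cannot leave {stage.name}: missing {sorted(missing)}")
    return Stage(stage.value + 1)
```

For example, `advance(Stage.DESIGN, {"use_case", "risk_assessment"})` returns `Stage.DEVELOPMENT`, while omitting the risk assessment raises an error that names the missing artifact.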

The model cards introduced by researchers at Google offer a practical example of organized documentation. Model cards summarize a model’s purpose, training data, evaluation metrics, and known limitations, giving stakeholders a clear understanding of how the system operates.
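As a rough illustration, a model card with the four elements listed above could be represented as a small data structure that renders to plain text. The field names and layout here are assumptions for this sketch, not the format from the original model cards work, and the values are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class ModelCard:
    """Simplified, hypothetical model card."""

    name: str
    purpose: str
    training_data: str
    evaluation_metrics: dict[str, float] = field(default_factory=dict)
    known_limitations: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Produce a plain-text summary for reviewers."""
        lines = [
            f"Model card: {self.name}",
            f"Purpose: {self.purpose}",
            f"Training data: {self.training_data}",
            "Evaluation metrics:",
        ]
        lines += [f"  {k}: {v:.3f}" for k, v in self.evaluation_metrics.items()]
        lines.append("Known limitations:")
        lines += [f"  - {item}" for item in self.known_limitations]
        return "\n".join(lines)


# Placeholder values for illustration only.
card = ModelCard(
    name="credit-scoring-model",
    purpose="Estimate default risk for loan applications",
    training_data="Historical loan outcomes (internal)",
    evaluation_metrics={"auc": 0.91},
    known_limitations=["Not validated for applicants without credit history"],
)
text = card.render()
```

Keeping the card as structured data rather than free text makes it easier to check for missing fields automatically and to store alongside the model.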

Thorough documentation also supports transparency across the organization. Compliance teams, engineers, and managers need to understand how models function so they can accurately assess risks. Without documentation, governance breaks down because decision-makers lack visibility into system behavior.

Project management tools and AI development platforms are increasingly integrating governance workflows into their ecosystems. Automated approval pipelines ensure that no model reaches production without completing the required review processes. This approach embeds the AI governance framework directly into the development cycle rather than treating it as an external checkpoint.
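An automated approval gate of the kind described above might look like the following sketch. The review step names and the `release` callback are hypothetical; in practice the gate would sit inside whatever deployment tooling the organization already uses.

```python
from typing import Callable


class ApprovalRequired(Exception):
    """Raised when a model has not completed every required review step."""


# Hypothetical review steps that must be completed before release.
REQUIRED_REVIEWS = ("design_review", "documentation_check", "board_approval")


def assert_deployable(model_id: str, completed_reviews: set[str]) -> None:
    """Raise ApprovalRequired if any required review step is outstanding."""
    missing = [step for step in REQUIRED_REVIEWS if step not in completed_reviews]
    if missing:
        raise ApprovalRequired(f"{model_id} blocked; pending: {', '.join(missing)}")


def deploy(
    model_id: str,
    completed_reviews: set[str],
    release: Callable[[str], None],
) -> None:
    """Run the governance gate first, then hand off to the release step."""
    assert_deployable(model_id, completed_reviews)
    release(model_id)
```

Because the check runs inside `deploy`, a release cannot happen through this path without the reviews, which is the sense in which governance is embedded in the development cycle rather than bolted on afterward.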

Compliance, Audit Trails, and Continuous Monitoring

AI systems require continuous monitoring and reporting even after implementation. A comprehensive AI governance framework must include mechanisms for maintaining audit trails and tracking model performance over time.

Audit trails document critical events during the AI lifecycle, including model updates, retraining occurrences, and governance approvals. These records provide transparency and enable organizations to demonstrate regulatory compliance during audits.
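One way to record such events is an append-only log in which each entry includes a hash of the previous one, so later tampering becomes detectable. Hash chaining is a design choice added for this sketch, not something the text above requires, and the class, field, and event names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """In-memory, hash-chained audit log (illustrative only)."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself.
        body = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

    def record(self, event_type: str, model_id: str, details: dict) -> dict:
        """Append an event; details must be JSON-serializable."""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "event_type": event_type,
            "model_id": model_id,
            "details": details,
            "previous_hash": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Check that no entry was altered and the chain is unbroken."""
        previous_hash = ""
        for entry in self.entries:
            if entry["previous_hash"] != previous_hash:
                return False
            if entry["hash"] != self._digest(entry):
                return False
            previous_hash = entry["hash"]
        return True
```

A team might call `trail.record("retraining", "credit-scoring-model", {"dataset_version": "v2"})` after each retraining run and run `trail.verify()` before handing records to an auditor. A production system would persist entries to durable storage rather than keep them in memory.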

The NIST AI Risk Management Framework highlights the need for continuous monitoring to ensure that AI systems behave as expected once deployed. Models can drift as data patterns evolve, making ongoing supervision essential.
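Drift can be quantified in several ways. The sketch below uses the Population Stability Index (PSI), a common drift metric chosen here for illustration; it is not something the NIST framework prescribes. The bin count and the small floor applied to empty bins are assumptions.

```python
import math


def population_stability_index(
    expected: list[float], actual: list[float], bins: int = 10
) -> float:
    """Compare a baseline sample with a recent sample of one numeric feature."""
    if not expected or not actual:
        raise ValueError("Both samples must be non-empty.")
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("Baseline sample has no spread to bin.")
    width = (hi - lo) / bins

    def proportions(values: list[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range fall into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / len(values), 1e-6) for c in counts]

    e = proportions(expected)
    a = proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data: the recent sample is concentrated in the upper half
# of the baseline range, so the index should be well above zero.
baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]
psi = population_stability_index(baseline, recent)
```

A PSI of zero means the two samples fill the bins identically, and larger values indicate a bigger shift. Teams often alert on a threshold such as 0.2, but that cutoff is a rule of thumb and should be tuned to the model and data.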

Global compliance requirements are also expanding. Laws increasingly require organizations to document the decision-making processes of AI systems, especially in high-sensitivity domains such as finance, healthcare, and recruitment. Maintaining thorough governance records means organizations can account for their AI systems when called upon to do so.

From an operational perspective, a well-implemented AI governance framework also serves as a safeguard against reputational risk. High-profile AI failures, such as bias in hiring algorithms or discriminatory credit scoring, have demonstrated that poorly governed AI can erode public trust. Robust governance systems help ensure these risks are identified and addressed before they escalate.

An AI Governance Framework as the Foundation of Responsible AI

As AI becomes deeply embedded in organizational processes, a well-defined AI governance framework will become as essential as cybersecurity policies or financial management controls. Firms deploying AI without structured oversight risk regulatory problems, reputational damage, and operational failures.

The pillars of an effective AI governance framework are internal AI review boards, well-organized approval workflows, detailed model documentation, and audit-ready monitoring systems. Together, these mechanisms ensure that AI systems operate responsibly while continuing to deliver business value.

At Creative Bits AI, we collaborate with organizations to develop AI governance frameworks that integrate smoothly into existing business processes. Implementing AI successfully demands more than technical expertise; it requires governance systems that balance innovation with responsibility. The most successful organizations of the future will not merely build powerful AI systems. They will build governance frameworks capable of managing them.