AI product cost is one of the most underestimated challenges organizations face when scaling artificial intelligence beyond pilot programs. Many leaders enter AI adoption expecting fully scalable, frictionless intelligence — only to discover that every AI-powered chatbot, document automation system, or recommendation engine carries significant and ongoing operational expenses. These are not one-time software purchases; they are living computational systems with costs that evolve as usage grows.

Once an organization moves past the pilot phase, a critical question emerges: How much should I budget for an AI solution? According to the McKinsey Global AI Survey 2024, 65% of organizations now leverage generative AI in at least one function — yet cost predictability and ROI remain the top concerns during scaling. This challenge frequently stems from focusing solely on API pricing while overlooking hidden cost layers, including latency, retries, storage, monitoring, and model fine-tuning.

At Creative Bits AI, we apply an engineering approach to AI cost modeling. Understanding token economics, model selection trade-offs, and infrastructure multipliers forms the foundation of any financially sustainable AI solution.

1. AI Product Cost Starts With Token Economics: The Unit Cost That Scales Exponentially

At the core of most modern AI systems lies token-based pricing. Large language models such as GPT-4o, Claude, and Gemini charge per token — units of text processed as input and generated as output. OpenAI’s pricing model clearly demonstrates that different models vary significantly in token cost depending on the capability tier. Consequently, even a small increase in average prompt length, conversation history retention, or output verbosity can dramatically increase monthly spend.

To illustrate, consider an AI customer support assistant processing 100,000 conversations per month. If each interaction averages 2,000 input tokens and 800 output tokens, a marginal 20% increase in verbosity adds millions of tokens monthly. At scale, this becomes a material financial variable. Anthropic’s documentation similarly highlights that longer context windows — while powerful — increase inference costs when organizations fail to manage them strategically.

Why Token Economics Directly Shapes AI Product Cost

Token economics extends well beyond price per thousand tokens. It includes:

- Context retention strategy

- Prompt optimization

- Memory pruning

- Retrieval augmentation efficiency

Organizations that neglect prompt structure routinely overpay by 30–50% due to bloated system messages and redundant history storage. Therefore, engineering efficient prompts is not merely performance tuning — it is cost governance.

At Creative Bits AI, we implement token budgeting frameworks during system design. Specifically, each workflow carries defined maximum token thresholds and dynamic truncation policies to prevent uncontrolled cost growth.

2. Model Selection Trade-Offs: How Capability, Cost, and Latency Affect AI Product Cost

Not all AI tasks require frontier models. In fact, one of the most expensive mistakes organizations make is deploying top-tier reasoning models for tasks that simpler models handle equally well. Google Cloud’s AI infrastructure documentation emphasizes that workload-model alignment is critical to maintaining predictable cost structures. While high-capacity models excel at complex reasoning and synthesis, tasks such as classification, tagging, routing, or extraction often perform just as effectively on smaller or fine-tuned models.

Furthermore, latency is a financial variable that organizations frequently overlook. A model that takes two seconds longer per request degrades customer experience and demands higher concurrency provisioning. According to Microsoft Azure’s AI performance guidance, model latency directly impacts infrastructure scaling requirements and user retention metrics.

The Three Variables That Determine AI Product Cost in Model Selection

Model selection involves three interlocking variables:

- Capability determines output quality.

- Cost determines operational sustainability.

- Latency determines real-world usability.

Organizations must therefore calculate marginal benefit per dollar spent. In many production systems, hybrid architectures emerge naturally — lightweight models handle routine tasks, while advanced models activate selectively for edge cases.

At Creative Bits AI, we routinely design routing layers that intelligently switch models based on complexity scoring. As a result, inference costs decrease without any compromise to output quality.

3. Infrastructure and Compute: The Hidden Multipliers in AI Product Cost

API cost represents only one layer of the AI cost stack. Infrastructure overhead, moreover, frequently becomes the silent multiplier that catches organizations off guard.

Storage costs accumulate through conversation logs, embeddings, vector databases, and backup systems. AWS pricing documentation shows that even moderate-scale storage with high read/write frequency generates noticeable operational expense. Additionally, inference computing introduces further cost when organizations host custom or open-source models. NVIDIA’s 2024 AI deployment insights highlight that GPU provisioning and energy consumption significantly impact the total cost of ownership for self-hosted AI systems.

Additional Infrastructure Costs That Compound AI Product Cost

Beyond storage and compute, organizations must also budget for:

- Monitoring and logging

- Prompt version control systems

- Failover redundancy

- DevOps engineering time

- Security audits

The IBM 2024 Cost of a Data Breach Report reinforces that AI systems handling sensitive data must incorporate governance and security monitoring — a financial layer that early-stage budgets consistently ignore.

As a result, when organizations move from prototype to production, indirect infrastructure costs frequently exceed raw model costs.

At Creative Bits AI, we conduct full-stack AI cost audits before deployment. Infrastructure, observability, compliance, and scaling elasticity all integrate into the financial forecast — not as afterthoughts, but as foundational design requirements.

4. Fine-Tuning, RAG, and the Long-Term AI Product Cost Structure

Many enterprises attempt to control AI product cost through fine-tuning or retrieval-augmented generation (RAG). However, both strategies shift the cost structure rather than eliminate it.

How Fine-Tuning Affects AI Product Cost

Fine-tuning introduces several ongoing expenses:

- Training compute cost

- Dataset curation labor

- Ongoing retraining cycles

- Version management overhead

OpenAI’s fine-tuning documentation outlines both the advantages and the additional operational requirements organizations must maintain for tuned models.

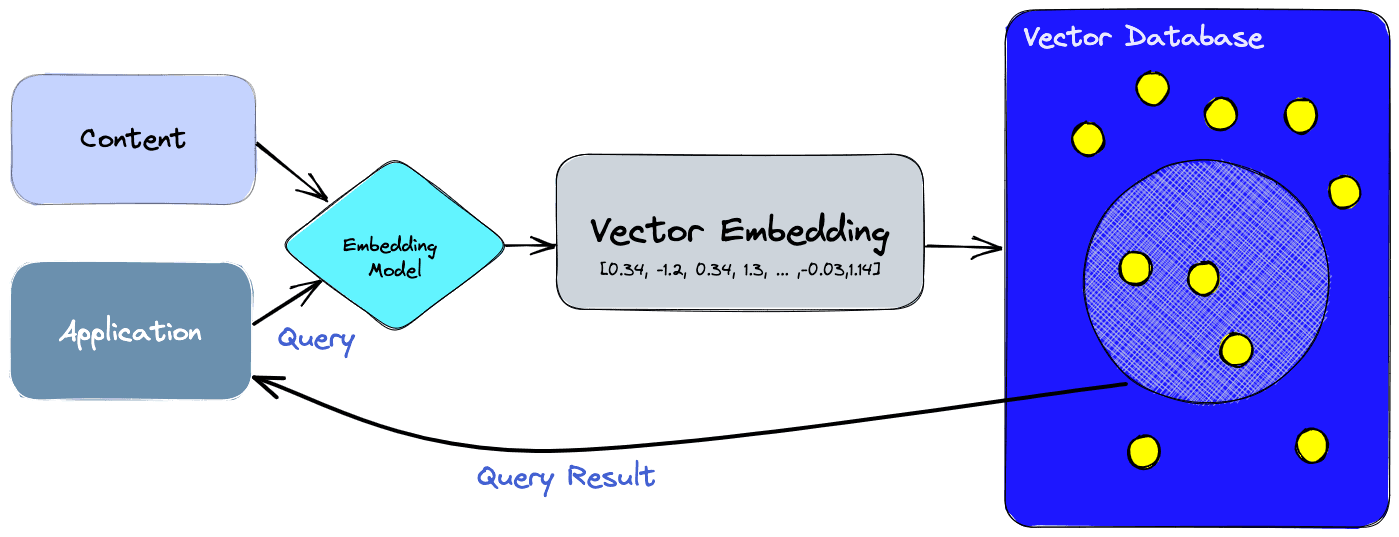

How RAG Architectures Redistribute AI Product Cost

RAG architectures reduce hallucination effectively — but they introduce storage, indexing, and retrieval costs in return. Pinecone’s architecture documentation demonstrates that vector databases require scalable infrastructure and active monitoring to maintain performance under load.

Moreover, latency becomes a hidden cost in RAG systems. Each retrieval call adds milliseconds that compound across multi-step workflows. The common misconception is that RAG is cheaper than deploying larger models. In reality, RAG shifts expenditure toward storage, compute orchestration, and ongoing maintenance.

The true AI product cost is therefore not linear — it is layered, compounding, and deeply architecture-dependent.

Engineering Cost-Aware AI Products at CBAI

AI products are not purely technical implementations — their economic frameworks define their long-term viability. Token consumption scales with adoption. Model selection dictates response times and concurrency capacity. Infrastructure compounds base costs. Fine-tuning and retrieval introduce ongoing maintenance obligations.

Organizations that treat AI applications as simple black-box APIs accumulate costs rapidly and unpredictably. Those that design architecture with cost visibility, by contrast, achieve predictable and sustainable growth.

At Creative Bits AI, financial architecture is embedded into every AI product we build. Token optimization strategies, intelligent model routing, elastic infrastructure design, and real-time cost visibility dashboards all combine to deliver AI systems that are not only high-performing but financially engineered for scale.

AI innovation without financial discipline is not sustainable. If your organization is developing an AI product and needs guidance on token economics, model selection, or infrastructure planning, contact us at Creative Bits AI. Together, we build intelligent, scalable, and cost-aware systems that deliver measurable business value.